|

| http://xkcd.com/1252/ |

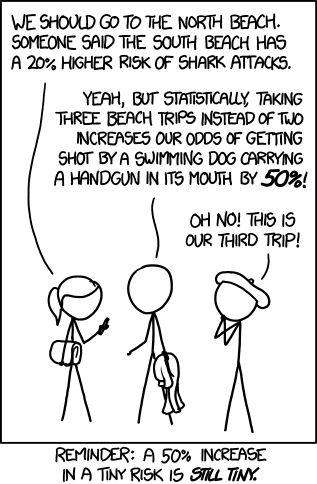

Two problems. XKCD perfectly describes one, which is that a relative risk increase is just about useless if you don't know the absolute risk. The other side of the problem: where do these risk values come from?

In 2001, Oxford Journals' International Journal of Epidemiology published "Epidemiology--is it time to call it a day?". Six years prior to that Science published "Epidemiology Faces Its Limits". So, 12-18 years later, have we gotten smarter about epidemiology?

Nope.

Maybe it's the amount of data or accessibility or ease of publishing or the disappearence of professional editing, but bad or trivial or meaningless stats and probabilities seem more common than ever. Our risk assessment skills seem just as bad as ever.

Two helpful guidelines for understanding any risk metric you see:

- assume it's not from a controlled trial (in other words, assume it's low quality)

- remember guidance from actual epidemiologists, from the 1995 article [emphasis mine, and keep in mind that, say, a "20% higher risk" is a relative risk of only 1.2]:

As a general rule of thumb," says Angell of the New England Journal, "we are looking for a relative risk of three or more [before accepting a paper for publication], particularly if it is biologically implausible or if it's a brand new finding." Robert Temple, director of drug evaluation at the Food and Drug Administration, puts it bluntly: "My basic rule is if the relative risk isn't at least three or four, forget it." But as John Bailar, an epidemiologist at McGill University and former statistical consultant for the NEJM, points out, there is no reliable way of identifying the dividing line. "If you see a 10-fold relative risk and it's replicated and it's a good study with biological backup, like we have with cigarettes and lung cancer, you can draw a strong inference," he says. "If it's a 1.5 relative risk, and it's only one study and even a very good one, you scratch your chin and say maybe.

No comments:

Post a Comment